Memory System

Long-term memory with cognitive types, temporal decay, entity relationships, hybrid search, offline embeddings, session journaling, and smart reranking.

The desktop app includes a long-term memory system that lets agents remember important facts, preferences, and context across sessions. This means your agent learns from previous interactions and provides more relevant responses over time.

How It Works

The memory system uses a hybrid search approach combining two techniques:

- Semantic search -- Finds memories with similar meaning. Good for paraphrase matching ("user prefers dark mode" matches "what theme does the user like?").

- Keyword search -- Finds exact word matches. Good for code symbols, IDs, and specific terms.

Results from both searches are combined and ranked by:

- Relevance -- Memories that best match the meaning and keywords of your prompt

- Recency -- More recent memories rank higher

- Importance -- Explicitly important memories rank higher

- Diversity -- The system avoids showing near-duplicate results

- AI reranking (optional) -- A lightweight AI model picks the most task-relevant memories from the top candidates
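
The ranking signals above can be pictured as a weighted blend. The following is a minimal sketch, not the app's actual scoring: the weights, the 30-day recency half-life, and the names `Candidate`, `hybrid_score`, and `rank` are all assumptions made for illustration. Diversity filtering and AI reranking are left out.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    text: str
    semantic: float    # similarity from semantic search, 0..1
    keyword: float     # match score from keyword search, 0..1
    age_days: float    # days since the memory was stored
    importance: float  # 0..1, higher for explicitly important memories

def hybrid_score(c, w_sem=0.5, w_kw=0.3, w_rec=0.1, w_imp=0.1, half_life_days=30.0):
    # Recency starts at 1.0 and halves every `half_life_days` (assumed value).
    recency = 0.5 ** (c.age_days / half_life_days)
    return w_sem * c.semantic + w_kw * c.keyword + w_rec * recency + w_imp * c.importance

def rank(candidates, limit=5):
    # Highest blended score first.
    return sorted(candidates, key=hybrid_score, reverse=True)[:limit]

candidates = [
    Candidate("The project uses PostgreSQL 16", 0.3, 0.0, 40, 0.5),
    Candidate("User prefers dark mode", 0.9, 0.2, 2, 0.5),
]
print([c.text for c in rank(candidates)])
```

A blend like this lets a strong keyword hit (for example an exact ID) surface even when its semantic similarity is low.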

Memory Types

The memory system categorizes memories by how they behave over time:

| Type | What It Stores | How Long It Lasts |
|---|---|---|
| Episodic | Interactions and events ("we discussed X yesterday") | Fades within days unless revisited |
| Semantic | Facts and preferences ("user prefers dark mode") | Persists for weeks |
| Procedural | Skills and workflows ("always run tests before committing") | Persists for months |
| Pinned | Anything you mark as permanent | Never fades |

The system automatically classifies new memories into the right type based on their content. You can also pin any memory to keep it permanently.

Memory Decay

Memories naturally fade over time, just like human memory. This keeps the system focused on what's relevant:

- Recent and frequently accessed memories stay strong

- Old and unused memories gradually fade

- Pinned memories never fade

- When a memory fades enough, it moves to an archive and stops appearing in search results

- Accessing a memory (through recall or search) refreshes it and prevents it from fading

Decay is off by default. Enable it in Settings > Memory > Advanced to activate this feature. You can also adjust how quickly each memory type fades.
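
One common way to model this kind of fading is exponential decay with a per-type half-life. The sketch below assumes that model; the document does not say which formula the app uses. The half-life values, the archive threshold, and every name here are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical half-lives in days, chosen only to mirror "days / weeks / months".
HALF_LIFE_DAYS = {"episodic": 3.0, "semantic": 21.0, "procedural": 90.0}
ARCHIVE_THRESHOLD = 0.1  # assumed cutoff below which a memory is archived

@dataclass
class Memory:
    text: str
    kind: str               # "episodic", "semantic", or "procedural"
    last_access_day: float  # day number of the most recent access
    pinned: bool = False

def strength(m, today):
    # Pinned memories never fade.
    if m.pinned:
        return 1.0
    elapsed = max(0.0, today - m.last_access_day)
    return 0.5 ** (elapsed / HALF_LIFE_DAYS[m.kind])

def is_archived(m, today):
    return strength(m, today) < ARCHIVE_THRESHOLD

def touch(m, today):
    # Accessing a memory refreshes it, resetting the decay clock.
    m.last_access_day = today
```

Under these assumed values, an episodic memory drops to half strength after three days without access, while a call to `touch` restores it to full strength.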

Entity Relationships

The memory system can automatically extract and track relationships between people, projects, technologies, and concepts mentioned in your conversations:

- People -- Team members, contacts, stakeholders

- Projects -- Work initiatives, products, codebases

- Technologies -- Languages, frameworks, tools

- Organizations -- Companies, teams, departments

- Concepts -- Ideas, methodologies, patterns

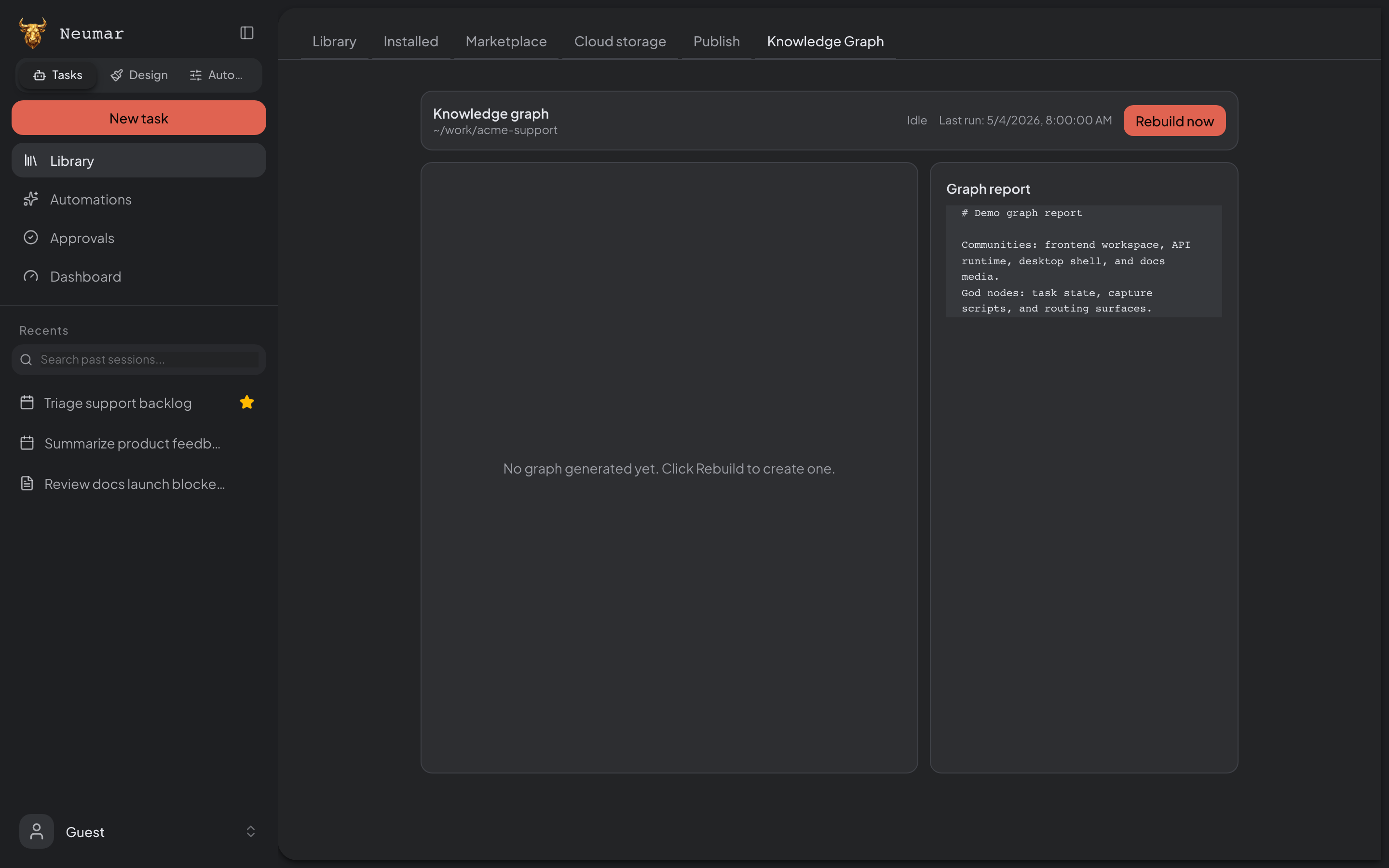

These entities form a knowledge graph that helps the agent understand connections. For example, it can know that "Alice manages the API project, which uses PostgreSQL."

Browse the entity graph in Settings > Memory > Explorer.

Entity extraction is off by default. Enable it in Settings > Memory > Advanced.
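
To make the knowledge-graph idea concrete, here is a small sketch of how entities and relationships could be held in memory. The `EntityGraph` class and its methods are hypothetical and say nothing about how the app stores its graph.

```python
from collections import defaultdict

class EntityGraph:
    def __init__(self):
        self.kinds = {}                 # entity name -> entity type
        self.edges = defaultdict(list)  # entity name -> [(relation, target)]

    def add_entity(self, name, kind):
        self.kinds[name] = kind

    def relate(self, source, relation, target):
        self.edges[source].append((relation, target))

    def reachable(self, start):
        """Return every entity reachable from `start` by following relations."""
        seen, stack = set(), [start]
        while stack:
            node = stack.pop()
            for _, target in self.edges.get(node, []):
                if target not in seen:
                    seen.add(target)
                    stack.append(target)
        return seen

graph = EntityGraph()
graph.add_entity("Alice", "person")
graph.add_entity("API project", "project")
graph.add_entity("PostgreSQL", "technology")
graph.relate("Alice", "manages", "API project")
graph.relate("API project", "uses", "PostgreSQL")
print(graph.reachable("Alice"))
```

Following relations outward from "Alice" reaches both the project she manages and the database that project uses, which is the kind of connection the example sentence describes.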

Memory Scoping

Memories can be scoped to different contexts:

| Scope | What It Means |
|---|---|
| Global | Available to all agents in all contexts |
| Profile | Only available when using a specific agent profile |
| Project | Only available within a specific project |
| Session | Only available within a single conversation |

This lets you keep memories organized. For example, coding preferences can be scoped to your developer profile, while project-specific details stay within their project.
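
Scoping amounts to filtering memories against the current context. The sketch below illustrates that idea under assumed names (`ScopedMemory`, `visible`, `scope_id`); it is not the app's data model.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ScopedMemory:
    text: str
    scope: str                      # "global", "profile", "project", or "session"
    scope_id: Optional[str] = None  # which profile/project/session; None for global

def visible(memories, profile_id, project_id, session_id):
    # Map each non-global scope to the identifier that is currently active.
    active = {"profile": profile_id, "project": project_id, "session": session_id}
    return [
        m for m in memories
        if m.scope == "global"
        or (m.scope_id is not None and active.get(m.scope) == m.scope_id)
    ]
```

With this filter, a memory scoped to one project never reaches an agent working in another, while global memories are always included.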

Cross-Channel Isolation

When you interact with the agent through different channels — such as Slack, Discord, Telegram, or Lark — your memories are automatically kept separate for each channel. This means:

- Memories from your Slack conversations won't appear when you use Telegram

- Each workspace within a platform is isolated too — a Slack team's memories stay within that team

- You can use the /forget command in any channel to clear your memories for that specific channel only, without affecting memories from other channels

This ensures your context and privacy are maintained across platforms. No manual configuration is needed — isolation happens automatically based on where the message comes from.
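
Isolation of this kind can be pictured as keying every memory by where it came from. The sketch below assumes a key of platform, workspace, and user; the real key structure is not documented here, and `ChannelMemoryStore` is a hypothetical name.

```python
from collections import defaultdict

class ChannelMemoryStore:
    def __init__(self):
        # (platform, workspace_id, user_id) -> list of memory texts
        self._by_channel = defaultdict(list)

    def store(self, platform, workspace_id, user_id, text):
        self._by_channel[(platform, workspace_id, user_id)].append(text)

    def recall(self, platform, workspace_id, user_id):
        # Only memories stored under the same key are returned.
        return list(self._by_channel.get((platform, workspace_id, user_id), []))

    def forget(self, platform, workspace_id, user_id):
        """Mimics /forget: clears one channel's memories and returns how many were removed."""
        return len(self._by_channel.pop((platform, workspace_id, user_id), []))

store = ChannelMemoryStore()
store.store("slack", "team-a", "u1", "prefers short answers")
store.store("telegram", "default", "u1", "working on the API project")
store.forget("slack", "team-a", "u1")
print(store.recall("telegram", "default", "u1"))
```

Because the key includes the workspace, two Slack teams stay separate even for the same user.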

Memory Lifecycle

Auto-Recall (Before Each Task)

When you start a new task, the system automatically:

- Takes your prompt and searches existing memories

- Returns the most relevant memories

- Formats them as context for the agent

- Passes that context to the agent, which uses the memories to inform its work

This happens transparently -- you don't need to manually tell the agent what to remember.
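
The formatting step has to fit the recalled memories into a limited context budget (see Max Recall Tokens under Configuration). The sketch below shows one way to do that. The four-characters-per-token estimate, the output layout, and the function names are assumptions, not the app's behavior.

```python
def estimate_tokens(text):
    # Rough assumption: about four characters per token.
    return max(1, len(text) // 4)

def build_recall_context(ranked_memories, max_tokens=1500):
    """Take memories in ranked order until the token budget is used up."""
    lines, used = [], 0
    for text in ranked_memories:
        cost = estimate_tokens(text)
        if used + cost > max_tokens:
            break
        lines.append(f"- {text}")
        used += cost
    if not lines:
        return ""
    return "Relevant memories:\n" + "\n".join(lines)

print(build_recall_context(["User prefers dark mode", "Use yarn, not npm"]))
```

Stopping at the first memory that does not fit keeps the highest-ranked memories and drops the tail.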

Auto-Capture (After Messages)

After you send messages, the system:

- Extracts potential facts from your messages

- Checks for duplicates to avoid storing the same fact twice

- Stores new facts as memories with appropriate categories
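
The duplicate check in the steps above can be sketched with a simple word-overlap measure. This is an illustration only: the app may well compare embeddings instead, and the Jaccard similarity, the 0.8 threshold, and the function names are all assumptions.

```python
import re

def tokens(text):
    # Lowercased alphanumeric words, ignoring punctuation.
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def is_duplicate(candidate, existing, threshold=0.8):
    cand = tokens(candidate)
    for stored in existing:
        other = tokens(stored)
        union = cand | other
        # Jaccard similarity: shared words divided by all distinct words.
        if union and len(cand & other) / len(union) >= threshold:
            return True
    return False

def capture(facts, store):
    """Append only facts that are not near-duplicates; return what was added."""
    added = []
    for fact in facts:
        if not is_duplicate(fact, store):
            store.append(fact)
            added.append(fact)
    return added
```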

AI-Powered Capture (Optional)

At configurable intervals, a lightweight AI model can:

- Analyze the conversation so far

- Extract structured facts

- Store them with categories and importance scores

This produces higher-quality memories than automatic extraction alone.

Session Indexing (Optional)

When enabled, the system:

- Breaks completed task conversations into searchable segments

- Generates searchable representations for each segment

- Makes entire past conversations findable by meaning

Memory Categories

Memories are categorized for better organization:

| Category | Examples |
|---|---|
| Preference | "User prefers TypeScript over JavaScript" |
| Fact | "The project uses PostgreSQL 16" |
| Decision | "Team decided to use Tailwind CSS 4" |
| Entity | "Alice is the team lead for the API project" |
| Interaction | "Discussed database migration approach with user" |
| Tool Pattern | "Agent used Linear API to create issues in batch" |
| Correction | "User corrected: use yarn, not npm" |
| Workflow | "Deploy process: run tests, then build, then push to staging" |
| Other | Uncategorized facts |

Memory Tools

Agents have several memory tools available during execution:

| Tool | Description |
|---|---|
| Recall | Search memories by meaning |
| Keyword Search | Find memories by exact words (good for IDs, variable names) |
| Store | Save a new memory |
| Forget | Remove a specific memory (in channels, /forget clears all your channel memories) |
| List | List all memories with filtering |
| Pin / Unpin | Pin important memories so they never fade |
| Report Drift | Flag a memory as outdated when it no longer matches reality |
| Browse Entities | List extracted people, projects, technologies, and other entities |
| Explore Graph | View relationships between entities |

These tools are automatically available when memory is enabled.

Memory Visibility

Each memory can be set to one of two visibility levels:

- Private (default) -- Only you can see it. No restrictions on what you store.

- Team -- Shareable with team members. The system automatically blocks sensitive content like API keys, passwords, and tokens from being stored as team-visible memories.
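
A check like the one applied to team-visible memories could be built from pattern matching. The sketch below is a guess at the general shape, not the app's actual filter: the patterns are illustrative and far from exhaustive, and the names `looks_sensitive` and `store_memory` are hypothetical.

```python
import re

# Illustrative patterns only; a real filter would need many more.
SENSITIVE_PATTERNS = [
    re.compile(r"(?i)\b(api[_-]?key|password|passwd|secret|token)\b\s*[:=]\s*\S+"),
    re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),             # key-like string with an "sk-" prefix
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]

def looks_sensitive(text):
    return any(p.search(text) for p in SENSITIVE_PATTERNS)

def store_memory(text, visibility="private"):
    # Private memories are unrestricted; team-visible ones are screened first.
    if visibility == "team" and looks_sensitive(text):
        raise ValueError("sensitive content cannot be stored as a team-visible memory")
    return {"text": text, "visibility": visibility}
```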

Session Journal Mode

By default, the system captures individual memories as they happen. With Journal Mode enabled, the system takes a different approach:

- During your session, observations are collected into a running journal

- At the end of the session, the journal is reviewed by AI

- Only the most durable, important facts are extracted and saved as memories

- Temporary details (debugging steps, file paths, code snippets) are filtered out

This produces fewer but higher-quality memories, especially useful for long working sessions.

Staleness Detection

Memories can become outdated over time. The system handles this in two ways:

- Age warnings -- When memories are recalled, older memories include a note for the agent to verify the information before acting on it

- Drift reporting -- If the agent notices a memory contradicts the current state of your project, it can flag the memory as outdated. Flagged memories are deprioritized in future recalls.

You can also manually review and remove outdated memories in Settings > Memory.
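
Both mechanisms can be sketched as a post-processing step on recalled memories. The 90-day warning age, the wording of the notes, and the function names below are assumptions; the document does not state what the app uses.

```python
def annotate(text, age_days, flagged_outdated=False, warn_after_days=90):
    """Append a verification note to old or flagged memories."""
    notes = []
    if age_days >= warn_after_days:
        notes.append(f"{age_days} days old, verify before acting")
    if flagged_outdated:
        notes.append("flagged as outdated")
    if not notes:
        return text
    joined = "; ".join(notes)
    return f"{text} [{joined}]"

def order_for_recall(memories):
    """memories: list of (text, age_days, flagged_outdated) tuples, best match first."""
    # sorted() is stable, so flagged memories move to the end without
    # disturbing the relevance order within each group.
    ordered = sorted(memories, key=lambda m: m[2])
    return [annotate(*m) for m in ordered]

print(order_for_recall([("Use npm", 200, True), ("Use yarn", 5, False)]))
```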

Embedding Providers

Choose how text is processed for similarity search:

| Provider | Speed | Cost |
|---|---|---|
| Local (default) | Fast (~40-60 ms) | Free |
| OpenAI | Network-dependent | API-priced |
| Gemini | Network-dependent | API-priced |

The local provider is recommended for most users:

- No API key required

- Works offline

- Supports 75 languages

- Model auto-downloads on first use (~340 MB)

Configuration

Configure memory in Settings > Memory:

| Setting | Description | Default |
|---|---|---|
| Enable Memory | Turn the memory system on/off | Off |
| Auto-Capture | Automatically extract facts from messages | On |
| Auto-Recall | Automatically search memories before tasks | On |
| Embedding Provider | Local, OpenAI, or Gemini | Local |
| AI Capture Interval | How often AI-powered extraction runs | Configurable |
| Session Indexing | Index completed conversations | Off |
| Memory Decay | Enable temporal memory fading | Off |
| Entity Extraction | Detect and track people, projects, technologies | Off |
| Consolidation | Periodically merge similar memories | Off |
| Max Recall Tokens | How much memory context the agent receives per task | 1500 |
| AI Reranking | Use AI to pick the most relevant memories (adds ~200 ms) | Off |
| Journal Mode | Accumulate observations during session, distill at end | Off |
| Capture Guard | How strictly the system filters what to remember (Strict, Standard, Relaxed) | Standard |
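
For quick reference, the defaults in the table can be written out as a single settings object. The field names below are hypothetical and do not correspond to a real configuration file or API; only the default values come from the table.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class MemorySettings:
    enable_memory: bool = False
    auto_capture: bool = True
    auto_recall: bool = True
    embedding_provider: str = "local"          # "local", "openai", or "gemini"
    ai_capture_interval: Optional[int] = None  # configurable; default and unit not documented here
    session_indexing: bool = False
    memory_decay: bool = False
    entity_extraction: bool = False
    consolidation: bool = False
    max_recall_tokens: int = 1500
    ai_reranking: bool = False
    journal_mode: bool = False
    capture_guard: str = "standard"            # "strict", "standard", or "relaxed"

# Example: turn memory on and opt in to decay, leaving everything else at its default.
settings = MemorySettings(enable_memory=True, memory_decay=True)
```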

Memory Management

- Reindex -- Rebuild search indexes (useful after changing providers)

- Export -- Download all memories as a file

- Import -- Load memories from a file

- Capacity limits -- Configurable maximum; oldest, least-used memories are removed first
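
The capacity rule can be sketched as a sort followed by a trim. How the app weighs "oldest" against "least-used" is not documented, so the ordering below (fewest accesses first, then oldest access) is an assumption, as are the field names.

```python
def enforce_capacity(memories, max_count):
    """memories: list of dicts with 'text', 'access_count', and 'last_access_day'.

    Returns (kept, evicted); `kept` preserves the original order.
    """
    overflow = len(memories) - max_count
    if overflow <= 0:
        return list(memories), []
    # Least-used first; ties broken by the oldest last access.
    eviction_order = sorted(memories, key=lambda m: (m["access_count"], m["last_access_day"]))
    evicted = eviction_order[:overflow]
    evicted_ids = {id(m) for m in evicted}
    kept = [m for m in memories if id(m) not in evicted_ids]
    return kept, evicted
```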

Memory Consolidation

Over time, you may accumulate many similar memories. The consolidation feature periodically merges near-duplicate memories into cleaner, unified entries:

- Similar memories are detected automatically

- A summary is created that combines the key information

- Original memories are archived (not deleted)

- Consolidation respects memory scope and language -- it won't merge memories from different contexts

Consolidation is off by default. Enable it in Settings > Memory > Advanced.
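
The grouping side of consolidation can be sketched as follows. This is a simplified illustration: word overlap stands in for whatever similarity measure the app uses, keeping the longest text stands in for the generated summary, and the 0.6 threshold and all names are assumptions.

```python
import re

def _tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def _similar(a, b, threshold=0.6):
    ta, tb = _tokens(a), _tokens(b)
    union = ta | tb
    return bool(union) and len(ta & tb) / len(union) >= threshold

def consolidate(memories):
    """memories: list of dicts with 'text', 'scope', and 'language'.

    Returns (active, archived). Originals in merged groups are archived, not deleted.
    """
    groups = []
    for m in memories:
        for g in groups:
            head = g[0]
            same_context = (head["scope"], head["language"]) == (m["scope"], m["language"])
            if same_context and _similar(head["text"], m["text"]):
                g.append(m)
                break
        else:
            groups.append([m])  # no matching group: start a new one

    active, archived = [], []
    for g in groups:
        if len(g) == 1:
            active.append(g[0])
            continue
        # Stand-in for the summary step: keep the longest text in the group.
        longest = max(g, key=lambda m: len(m["text"]))
        active.append(dict(longest, consolidated_from=len(g)))
        archived.extend(g)
    return active, archived
```

Comparing scope and language before similarity is what keeps memories from different contexts from being merged.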

Safety Features

The memory system includes several safety measures:

- Injection protection -- Memories are safely formatted before being shown to the agent

- Sensitive content blocking -- Team-visible memories cannot contain API keys, passwords, or secrets

- Staleness warnings -- Old memories include age-based warnings so the agent verifies before acting

- Capacity limits -- Prevents unbounded memory growth

- Deduplication -- Prevents storing near-identical facts

- Drift detection -- Agents can flag outdated memories to keep your memory store accurate

- Source tracking -- Each memory records how it was created (manual, auto-capture, tool, or API)

Learn More

- Agent System -- How agents use memory during execution

- Workspace Security -- Data protection and isolation