The most common framing of AI agent safety focuses on catastrophic failure modes: an agent that pursues a goal by means its designers never intended, causes irreversible harm, or operates in ways that humans cannot detect or correct. These concerns are real and important. But for practitioners building and deploying agent systems today, the more immediate challenge is a subtler one: how do you build an agent that is useful enough to act autonomously in routine situations, but reliably conservative enough to pause and seek guidance when it reaches the edge of what it should decide alone?

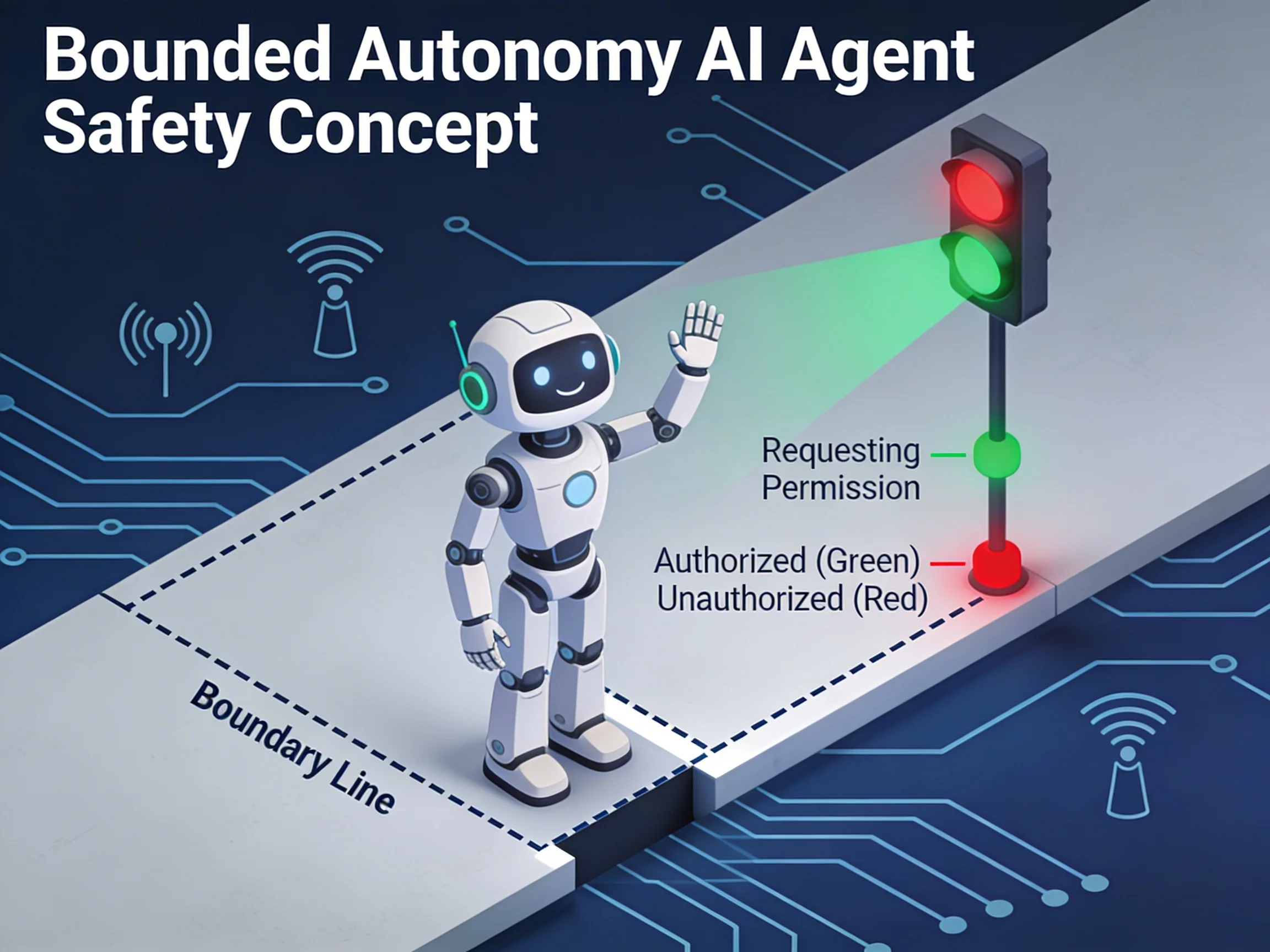

This is the problem of bounded autonomy, and getting it right requires design choices at every layer of the system.

What Bounded Autonomy Means in Practice

Bounded autonomy does not mean a timid agent that asks for permission at every step. It means an agent with explicit, well-designed constraints on the scope of its independent action — constraints that the agent itself understands and enforces, rather than constraints imposed by external rate-limiting or blocklists.

A bounded autonomous agent has clear answers to these questions:

- What actions am I authorized to take without explicit user approval?

- What conditions indicate that I have reached the edge of my authorization?

- When I reach that edge, how do I escalate appropriately rather than proceeding or failing silently?

- What actions are irreversible, and what approval requirements apply before I take them?

- How do I respond to an instruction to stop, regardless of where I am in an ongoing task?

The answers to these questions should be encoded in the agent's operational design, not left to implicit model behavior. Relying on the underlying language model to "use good judgment" about when to escalate is not a safety architecture. It is the absence of one.

Operational Bounds: Defining What the Agent Can Do Alone

The first design decision is defining the agent's operational scope — the domain within which it acts autonomously without requiring approval.

Effective scope definitions have three properties. They are concrete rather than abstract: "can modify files in the workspace directory" is concrete; "should use good judgment about file changes" is not. They are verifiable at runtime: the agent can check whether a proposed action falls within scope before taking it. And they are conservative by default: when uncertain whether an action falls within scope, the agent treats it as out-of-scope until explicitly authorized.
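To make the three properties tangible, here is a minimal sketch of a runtime scope check for the "can modify files in the workspace directory" example. The `WORKSPACE` path and the `in_scope` function name are assumptions made for illustration; they are not taken from any particular agent framework.

```python
from pathlib import Path

# Hypothetical workspace root; the text above only says "the workspace directory".
WORKSPACE = Path("/srv/agent/workspace")


def in_scope(target: str, workspace: Path = WORKSPACE) -> bool:
    """Return True only if `target` provably resolves inside the workspace."""
    try:
        # Resolve ".." segments and symlinks before comparing, so that
        # "../secrets.txt" cannot slip past a naive prefix check.
        resolved = (workspace / target).resolve()
        return resolved.is_relative_to(workspace.resolve())
    except (OSError, ValueError):
        # Conservative default: anything that cannot be verified is out of scope.
        return False
```

The rule is concrete (a directory, not a judgment call), verifiable before the write happens, and falls back to "out of scope" whenever the check itself fails. `Path.is_relative_to` requires Python 3.9 or later.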

Practical scope categories for software development agents include:

Read-only actions: querying documentation, reading source files, inspecting logs, running tests that do not modify state. These are almost always safe to perform autonomously.

Reversible write actions: creating new files, writing to a configured workspace directory, generating draft content that requires review before use. These are generally safe with light logging and user notification.

Significant actions with consequences: committing to a repository, creating tickets, sending messages, deploying configuration changes. These require explicit authorization even if they fall within the agent's technical capability.

Irreversible or high-stakes actions: deleting data, making external API calls with billing implications, modifying production infrastructure. These should never occur without explicit, confirmed user approval.

The key insight is that authorization should be a function of consequence, not just capability. An agent with filesystem access is technically capable of deleting files. Having that capability does not mean it should exercise it autonomously.
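One way to express "authorization as a function of consequence" is a lookup from action category to required authorization, kept separate from whatever the agent is technically able to do. The sketch below assumes the four categories described above; the action names in `ACTION_CATEGORY` are hypothetical examples, not a real tool registry.

```python
from enum import Enum


class Category(Enum):
    READ_ONLY = "read-only"
    REVERSIBLE_WRITE = "reversible write"
    SIGNIFICANT = "significant action"
    IRREVERSIBLE = "irreversible or high-stakes"


class Authorization(Enum):
    AUTONOMOUS = "none (autonomous)"
    LOG_AND_NOTIFY = "light logging and notification"
    EXPLICIT = "explicit authorization"
    CONFIRMED = "confirmed user approval"


# Authorization depends on the consequence category, not on capability.
REQUIRED = {
    Category.READ_ONLY: Authorization.AUTONOMOUS,
    Category.REVERSIBLE_WRITE: Authorization.LOG_AND_NOTIFY,
    Category.SIGNIFICANT: Authorization.EXPLICIT,
    Category.IRREVERSIBLE: Authorization.CONFIRMED,
}

# Hypothetical action names, for illustration only.
ACTION_CATEGORY = {
    "read_file": Category.READ_ONLY,
    "create_file": Category.REVERSIBLE_WRITE,
    "git_commit": Category.SIGNIFICANT,
    "delete_data": Category.IRREVERSIBLE,
}


def required_authorization(action: str) -> Authorization:
    # Unclassified actions get the strictest requirement (conservative default).
    category = ACTION_CATEGORY.get(action, Category.IRREVERSIBLE)
    return REQUIRED[category]
```

Treating unknown actions as irreversible is a design choice in this sketch: a newly added tool stays gated until someone classifies it deliberately.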

Escalation Paths: What to Do at the Edge

An agent that reaches the edge of its operational scope and has no clear escalation path will do one of two things: proceed anyway (dangerous) or fail silently (unhelpful). Neither is acceptable.

Good escalation design gives the agent a clear protocol for surfacing uncertainty, obtaining clarification, and returning to a state from which it can continue. Escalation is not a failure — it is the system working correctly.

Escalation triggers should be defined explicitly:

Scope boundary detection: the agent has determined that the most effective next action falls outside its authorized scope.

Uncertainty threshold: the agent's confidence in the correct next action falls below the level needed to justify acting autonomously.

Irreversibility detection: the agent has identified that the next action would be difficult or impossible to reverse, and no explicit authorization for irreversible actions in this context has been provided.

Novel context: the agent encounters a situation significantly different from any it has been given guidance for — a new type of external system, an unexpected error condition, a task that has morphed into something different from what was originally requested.

The escalation mechanism itself matters. An agent that surfaces an ambiguous wall of text and waits for any response is not providing an effective escalation. An agent that clearly states what it knows, what it is uncertain about, what options are available, and what specific information or authorization it needs to proceed is genuinely useful even when paused.
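The four triggers and the structured escalation message can be sketched as follows. Everything here is illustrative: the `ProposedAction` fields, the `CONFIDENCE_THRESHOLD` value of 0.8, and the `EscalationRequest` layout are assumptions, and how an agent would actually estimate its confidence or detect a novel context is left open.

```python
from dataclasses import dataclass
from enum import Enum


class Trigger(Enum):
    SCOPE_BOUNDARY = "scope boundary"
    LOW_CONFIDENCE = "uncertainty threshold"
    IRREVERSIBLE = "irreversibility"
    NOVEL_CONTEXT = "novel context"


# Illustrative value only; the right threshold depends on the deployment.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class ProposedAction:
    name: str
    in_scope: bool
    confidence: float  # the agent's own estimate, 0.0 to 1.0
    reversible: bool
    irreversible_authorized: bool = False
    novel_context: bool = False


def escalation_triggers(action: ProposedAction) -> list[Trigger]:
    """Return every trigger that fires; an empty list means proceed."""
    fired = []
    if not action.in_scope:
        fired.append(Trigger.SCOPE_BOUNDARY)
    if action.confidence < CONFIDENCE_THRESHOLD:
        fired.append(Trigger.LOW_CONFIDENCE)
    if not action.reversible and not action.irreversible_authorized:
        fired.append(Trigger.IRREVERSIBLE)
    if action.novel_context:
        fired.append(Trigger.NOVEL_CONTEXT)
    return fired


@dataclass
class EscalationRequest:
    known: str
    uncertain: str
    options: list[str]
    needed: str

    def render(self) -> str:
        # One labeled line per element, so the user sees exactly what is asked.
        lines = [
            f"What I know: {self.known}",
            f"What I am unsure about: {self.uncertain}",
            "Options:",
            *[f"  {i}. {opt}" for i, opt in enumerate(self.options, 1)],
            f"What I need to proceed: {self.needed}",
        ]
        return "\n".join(lines)
```

Returning all fired triggers, rather than stopping at the first, lets the escalation message explain every reason the agent paused.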

Interruptibility: The Underrated Requirement

Most safety discussions focus on preventing the wrong action. Less attention goes to ensuring that a running agent can be stopped cleanly at any point during execution.

Interruptibility is a property of the system's execution model, not just its decision-making logic. An agent running a long task must be designed so that:

Stopping is always possible. The agent should avoid sequences of actions that, if interrupted partway through, would leave the system in an inconsistent state. Multi-step operations should be structured so that partial execution is detectably incomplete and the agent or user can take appropriate cleanup action.

Stopping is communicated clearly. When a user interrupts an agent, they should receive a clear summary of what was completed, what was in progress, and the current state of any modified resources. "Task stopped" with no further information leaves the user unable to evaluate what cleanup is needed.

Stopping at a checkpoint is preferred to stopping mid-action. Where possible, the agent should finish its current atomic action before honoring a stop request, rather than interrupting an action at an arbitrary point. A task that was interrupted between two related writes is harder to reason about than one that completed an action and was interrupted at the natural boundary between actions.

The agent can be resumed. When a task is interrupted, the information needed to resume it — the plan state, the completed steps, the in-progress context — should be preserved. Interruptible does not mean "restart from scratch."
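These four requirements can be illustrated with a small checkpointed execution loop. This is a sketch under simplifying assumptions: steps are plain callables run sequentially in one thread, each step is treated as atomic, and `TaskState` and `run` are hypothetical names. A real system would also persist the state somewhere durable.

```python
import threading
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TaskState:
    """Everything needed to resume: what is done and what remains."""
    completed: list[str] = field(default_factory=list)
    pending: list[str] = field(default_factory=list)


def run(steps: dict[str, Callable[[], None]],
        state: TaskState,
        stop: threading.Event) -> str:
    while state.pending:
        # The stop request is checked only between steps, so the current
        # atomic action always finishes before the agent pauses.
        if stop.is_set():
            return (f"Stopped at checkpoint. Completed: {state.completed}. "
                    f"Not started: {state.pending}.")
        name = state.pending[0]
        steps[name]()
        # Record progress only after the step has actually finished.
        state.completed.append(state.pending.pop(0))
    return f"Finished. Completed: {state.completed}."
```

Because `state` survives the interruption, clearing the event and calling `run` again with the same `state` continues from the first step that had not started, rather than restarting from scratch.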

Neumar's Two-Phase Execution as Bounded Autonomy

Neumar's two-phase plan-then-execute architecture is not primarily an agent capability pattern — it is a bounded autonomy pattern. The plan phase is where the agent makes its autonomy boundaries explicit and the user validates them before execution begins.

During the plan phase, the agent produces a structured decomposition of the task: what steps it intends to take, in what order, using what tools. The user can review this plan, modify it, remove steps that fall outside what they authorized, and ask the agent to reconsider its approach before a single real action is taken. This is the scope validation step made concrete.

During the execution phase, the plan serves as a contract. If the agent encounters a situation that requires an action not covered by the approved plan, it pauses and surfaces the decision rather than expanding its own scope. The plan is not a rigid script — adaptive re-planning is supported — but re-planning that adds significant new actions requires user acknowledgment.
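The idea of the plan as a contract can be sketched in a few lines. This is not Neumar's implementation, which is not shown here; `Step`, `ApprovedPlan`, and `PlanBoundaryReached` are hypothetical names chosen to illustrate the pattern of checking each action against what the user approved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    tool: str
    target: str


class PlanBoundaryReached(Exception):
    """Raised when the agent proposes a step the user has not approved."""


class ApprovedPlan:
    def __init__(self, steps: list[Step]) -> None:
        self._approved = set(steps)

    def check(self, step: Step) -> None:
        # Executing an unapproved step would expand the agent's own scope,
        # so the executor pauses and surfaces the decision instead.
        if step not in self._approved:
            raise PlanBoundaryReached(f"{step.tool} on {step.target}")

    def acknowledge(self, step: Step) -> None:
        # Called only after the user has explicitly accepted the new step.
        self._approved.add(step)
```

An executor would call `check` before every tool invocation and convert `PlanBoundaryReached` into an escalation to the user. Re-planning then goes through `acknowledge`, so new actions enter the plan only with user acknowledgment.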

The practical effect is that users maintain genuine oversight of what the agent is doing without needing to monitor every individual tool call. The plan-level review provides strategic visibility; execution-level transparency provides operational visibility for users who want it; escalation when the plan boundary is reached ensures that the agent's autonomy is always bounded by explicit user intent.

Building It Right

Bounded autonomy is not a single feature. It emerges from the combination of scope definition, escalation design, interruptibility, and execution transparency working together. Missing any one of these creates gaps that manifest as surprising behavior in production.

The organizations that deploy agent systems successfully are those that invest in this design work before deployment rather than discovering the gaps through incidents. An agent that reliably knows when to stop and ask is more valuable than one that confidently does the wrong thing. Designing for that property deliberately, rather than hoping the model figures it out, is what separates systems that earn trust from those that erode it.

Action Categories and Authorization Levels

| Category | Examples | Authorization Required | Risk Level |
|---|---|---|---|
| Read-only | Query docs, read source, inspect logs, run read-only tests | None (autonomous) | Low |
| Reversible writes | Create new files, write to workspace, generate drafts | Light logging + notification | Low-Medium |
| Significant actions | Git commits, create tickets, send messages, deploy config | Explicit authorization | Medium-High |
| Irreversible / high-stakes | Delete data, external API calls with billing, modify production | Confirmed user approval | High |